diffray vs Fallom

Side-by-side comparison to help you choose the right AI tool.

diffray

Diffray's AI agents catch real bugs in your code, not just nitpicks.

Last updated: February 28, 2026

Fallom

Fallom tracks every AI agent action and LLM call in real time for full observability.

Last updated: February 28, 2026

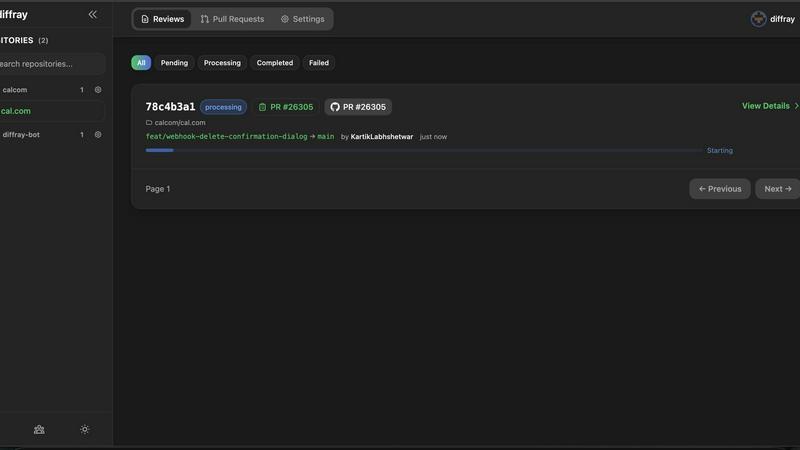

Visual Comparison

diffray

Fallom

Feature Comparison

diffray

Multi-Agent AI Architecture

diffray's core innovation is its team of over 30 specialized AI agents. Instead of one model attempting to be a jack-of-all-trades, each agent focuses on a specific domain, such as security, performance, or bug detection. This architecture allows for deep, parallel analysis of your code, so feedback is expert-level and highly relevant. The system routes code sections to the appropriate agents, giving broader coverage than a single general-purpose model typically provides.
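To make the routing idea concrete, here is a minimal sketch of sending changed files to domain-specific reviewers. It is an illustration only, not diffray's actual implementation: the `ROUTES` table, the glob patterns, the agent names, and the `route` function are all hypothetical.

```python
from fnmatch import fnmatch

# Hypothetical routing table: glob pattern -> names of agents that should look.
ROUTES = [
    ("*auth*", ["security"]),
    ("*.sql", ["security", "performance"]),
    ("*.py", ["bugs"]),
]


def route(changed_files):
    """Map each agent name to the changed files it should review."""
    work = {}
    for path in changed_files:
        for pattern, agents in ROUTES:
            if not fnmatch(path, pattern):
                continue
            for agent in agents:
                files = work.setdefault(agent, [])
                if path not in files:  # avoid queuing the same file twice
                    files.append(path)
    return work


work = route(["src/auth/login.py", "db/report.sql", "README.md"])
```

Each agent's file list could then be reviewed in parallel; files that match no pattern (here `README.md`) are simply not assigned.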

Full-Repository Context Analysis

diffray moves beyond the limited view of a simple diff. It investigates your entire codebase to understand the full context of a change. This means it can identify how new code interacts with existing functions, spot inconsistencies with project-wide patterns, and detect deeper architectural issues. This context-aware review eliminates generic suggestions and provides insights that are truly specific to your project's structure and standards.

Drastic Reduction in False Positives

By combining expert agents with full-context analysis, diffray delivers precise feedback. It filters out the noise that plagues other AI review tools, with a reported 87% reduction in false positives. Developers can trust the platform's alerts and focus their energy on genuine, critical issues rather than debating incorrect or irrelevant suggestions, which streamlines the entire review workflow.

Seamless GitHub Integration

Designed for a frictionless developer experience, diffray integrates directly into your existing GitHub workflow. Setup is simple and requires minimal configuration. Once connected, it automatically reviews pull requests, posting comments directly on the relevant lines of code. This native integration means there's no need to switch contexts or learn a new interface; intelligent review becomes a natural part of your team's standard development process.

Fallom

Real-Time LLM Call Dashboard

Monitor every AI interaction live from a centralized, mobile-friendly dashboard. See prompts, model outputs, token counts, latency, and cost for each call in real-time. Click into any trace for a detailed breakdown, enabling instant debugging and performance monitoring without sifting through logs.

Granular Cost Attribution & Analytics

Gain full financial transparency over your AI operations. Fallom automatically tracks and attributes spend per model, per user, per team, or per customer. Visualize costs with clear charts and reports, enabling accurate budgeting, chargeback, and optimization to control your LLM expenditure effectively.
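As a rough illustration of per-model and per-team attribution, the sketch below aggregates spend from a list of call records. It does not use Fallom's SDK or data model: the `LLMCall` fields, the `PRICE_PER_1K_TOKENS` table, the model names, and the prices are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider.
PRICE_PER_1K_TOKENS = {"model-a": 0.50, "model-b": 2.00}


@dataclass
class LLMCall:
    model: str
    team: str
    tokens: int


def call_cost(call):
    """Cost of one call in USD under the hypothetical price table."""
    return call.tokens / 1000 * PRICE_PER_1K_TOKENS[call.model]


def attribute_spend(calls, key):
    """Sum call costs grouped by an attribute name such as 'model' or 'team'."""
    totals = defaultdict(float)
    for call in calls:
        totals[getattr(call, key)] += call_cost(call)
    return dict(totals)


calls = [
    LLMCall(model="model-a", team="search", tokens=2000),
    LLMCall(model="model-b", team="search", tokens=1000),
    LLMCall(model="model-a", team="support", tokens=4000),
]

spend_by_model = attribute_spend(calls, "model")
spend_by_team = attribute_spend(calls, "team")
```

The same grouping works for any attribute carried on the record, such as a user or customer identifier.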

Compliance-Ready Audit Trails

Meet stringent regulatory requirements like the EU AI Act, SOC 2, and GDPR with built-in compliance features. Fallom provides immutable, complete audit trails of every LLM interaction, including input/output logging, model versioning, and user consent tracking, all essential for enterprise deployments.

Advanced Debugging with Timing Waterfalls & Tool Visibility

Debug complex, multi-step agent workflows with precision. Visualize latency bottlenecks using timing waterfall diagrams that break down each step of an agent's execution. Inspect every function or tool call your agent makes, including arguments and results, to quickly identify and resolve issues.
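A timing waterfall is essentially a list of spans drawn against a shared time axis. The sketch below renders one as text and picks out the slowest step. The `Span` type, the step names, and the timings are made up for illustration and do not reflect Fallom's trace format.

```python
from dataclasses import dataclass


@dataclass
class Span:
    name: str
    start_ms: int
    end_ms: int


def render_waterfall(spans, ms_per_char=100):
    """Return one text row per span: offset padding, then a bar for its duration."""
    width = max(len(s.name) for s in spans)
    rows = []
    for s in sorted(spans, key=lambda s: s.start_ms):
        duration = s.end_ms - s.start_ms
        offset = " " * (s.start_ms // ms_per_char)
        bar = "#" * max(1, duration // ms_per_char)
        rows.append(f"{s.name.ljust(width)} |{offset}{bar} {duration} ms")
    return rows


def slowest(spans):
    """The span with the longest duration, usually the first place to look."""
    return max(spans, key=lambda s: s.end_ms - s.start_ms)


spans = [
    Span("plan (LLM)", 0, 400),
    Span("search (tool)", 400, 1300),
    Span("answer (LLM)", 1300, 1600),
]

waterfall = render_waterfall(spans)
bottleneck = slowest(spans)
print("\n".join(waterfall))
```

In this made-up trace the tool call accounts for most of the elapsed time, which is the kind of bottleneck a waterfall view makes visible at a glance.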

Use Cases

diffray

Accelerating Pull Request Reviews

Development teams use diffray to drastically cut down PR review time. By providing immediate, high-quality AI feedback as soon as a PR is opened, it gives reviewers a head start and authors actionable items to address early. Teams report reducing average weekly PR review time from 45 minutes to just 12 minutes (roughly a 73% reduction), allowing them to merge code faster and maintain a rapid development pace without bottlenecks.

Enforcing Code Quality & Best Practices

diffray acts as a consistent, automated guardian of code quality. Its specialized agents continuously check for adherence to best practices, architectural patterns, and style guides across every pull request. This is especially valuable for growing teams or open-source projects, ensuring all contributions maintain a high standard and reducing the stylistic and structural debates that often slow down human reviewers.

Proactive Security & Vulnerability Detection

Security teams and developers leverage diffray's dedicated security agents to catch vulnerabilities early in the development cycle. By analyzing code changes in the context of the entire application, it can identify potential security flaws, insecure dependencies, and common vulnerability patterns before they reach production, shifting security left and making applications more robust by design.

Onboarding New Team Members

New engineers can use diffray as an always-available mentor. As they submit their first pull requests, diffray provides instant, educational feedback on code structure, project-specific patterns, and potential improvements. This accelerates the onboarding process, helps new hires align with team standards quickly, and reduces the initial review burden on senior developers.

Fallom

Debugging Complex Agentic Workflows

When your multi-step AI agent fails or behaves unexpectedly, Fallom provides the clarity needed. Trace the entire execution path, view the exact inputs and outputs at each step (LLM calls, tool calls), and analyze timing waterfalls to pinpoint exactly where and why the failure occurred, drastically reducing mean time to resolution.

Managing and Optimizing LLM Costs

Gain control over unpredictable AI spending. Use Fallom's cost attribution dashboards to see which models, teams, or features are driving your bill. Identify inefficiencies, compare cost-performance of different models through A/B testing, and set up alerts for anomalous spend to maintain budget predictability.

Ensuring Compliance and Audit Readiness

For teams in regulated industries, Fallom automates the creation of a verifiable audit trail. Document every AI decision for compliance reviews, demonstrate user consent logging, and utilize privacy modes for sensitive data. This ensures your LLM applications meet legal and internal governance standards from day one.

Monitoring Production Reliability and Performance

Proactively ensure your AI features are reliable and fast. Set up real-time monitors on key metrics like latency, error rates, and token usage. Get alerted to performance degradation or model outages immediately, allowing you to maintain a high-quality user experience and trust in your AI-powered products.
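A monitor of this kind can be thought of as a rolling window of recent calls checked against thresholds. The sketch below is a generic illustration, not Fallom's monitoring API: the `RollingMonitor` class, its default thresholds, and the alert names are assumptions.

```python
import math
from collections import deque


class RollingMonitor:
    """Track the most recent calls and flag threshold breaches."""

    def __init__(self, window=100, max_error_rate=0.05, max_p95_latency_ms=2000):
        self.calls = deque(maxlen=window)  # old calls fall off automatically
        self.max_error_rate = max_error_rate
        self.max_p95_latency_ms = max_p95_latency_ms

    def record(self, latency_ms, ok):
        self.calls.append((latency_ms, ok))

    def alerts(self):
        """Return the names of the metrics currently over their threshold."""
        if not self.calls:
            return []
        latencies = sorted(latency for latency, _ in self.calls)
        # Nearest-rank 95th percentile of the window.
        rank = max(1, math.ceil(0.95 * len(latencies)))
        p95 = latencies[rank - 1]
        error_rate = sum(1 for _, ok in self.calls if not ok) / len(self.calls)
        found = []
        if error_rate > self.max_error_rate:
            found.append("error_rate")
        if p95 > self.max_p95_latency_ms:
            found.append("p95_latency")
        return found


monitor = RollingMonitor(window=10)
for _ in range(9):
    monitor.record(latency_ms=100, ok=True)
monitor.record(latency_ms=5000, ok=False)  # one slow, failed call
current_alerts = monitor.alerts()
```

A real deployment would also track token usage and forward alerts to a notification channel; both are left out here for brevity.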

Overview

About diffray

diffray is an AI-powered code review platform designed for modern development teams who value speed without sacrificing quality. It cuts through the clutter of generic AI feedback with a multi-agent architecture. Unlike tools that rely on a single AI model, diffray uses over 30 specialized AI agents, each an expert in a specific domain such as security vulnerabilities, performance bottlenecks, bug patterns, code best practices, and even SEO considerations. This targeted, investigative approach lets diffray understand the context of your changes by examining your entire codebase, not just the lines in the pull request diff. The result is precise, actionable insights that are directly relevant to your project. For developers, this means a shift from sifting through speculative, noisy comments to receiving focused, context-aware reviews. Teams using diffray report an 87% reduction in false positives and a 3x increase in critical, real issues caught early. With GitHub integration and a simple setup, diffray helps developers ship higher-quality code faster, turning lengthy review cycles into efficient, high-signal conversations.

About Fallom

Fallom is the AI-native observability platform built for teams deploying production-grade LLM applications and autonomous agents. It turns the opaque "black box" of AI interactions into a transparent, actionable view, giving developers, AI engineers, and platform teams real-time visibility. The core challenge it solves is the difficulty of monitoring, debugging, and managing the cost, performance, and reliability of complex LLM workloads at scale. Fallom's primary value proposition is enterprise-ready observability in minutes through a single, OpenTelemetry-native SDK. With Fallom, you can see every detail of an LLM call (prompts, outputs, tool calls, token usage, latency, and cost), all correlated with session and user context. This lets teams debug intricate agentic workflows, attribute spend across models and teams, support compliance with detailed audit trails, and maintain system reliability, all from an intuitive, mobile-friendly dashboard. Fallom helps organizations move fast with AI without flying blind, keeping their applications performant, cost-effective, and trustworthy from day one.

Frequently Asked Questions

diffray FAQ

How does diffray differ from other AI code review tools?

diffray fundamentally differs through its multi-agent architecture. Most tools use a single, general-purpose AI model, which often leads to generic and noisy feedback. diffray employs over 30 AI agents, each a specialist in areas like security, performance, or bugs. This, combined with its analysis of your full codebase context, allows it to provide precise, investigative reviews that dramatically reduce false positives and catch more critical issues.

What programming languages and frameworks does diffray support?

diffray is designed to be versatile and supports a wide range of popular programming languages and frameworks. Its specialized agents are trained to understand the nuances and best practices of different tech stacks. For the most current and detailed list of supported languages, please refer to the official documentation on the diffray website.

Is my code secure with diffray?

Yes. diffray takes code security and privacy seriously. The platform is built with enterprise-grade security practices. You can review the detailed privacy policy and security documentation on the diffray website, which outline its data handling, encryption standards, and compliance measures for keeping your intellectual property protected.

How quickly can my team get started with diffray?

Getting started is incredibly fast and simple. The primary step is integrating diffray with your GitHub organization or repository, a process that takes just a few clicks. There is no complex infrastructure to set up or lengthy configuration required. Once connected, diffray will immediately begin providing intelligent reviews on new pull requests, delivering value from day one.

Fallom FAQ

How quickly can I integrate Fallom into my existing application?

Integration is designed to be incredibly fast. Using the OpenTelemetry-native SDK, you can typically start sending traces and seeing data in the Fallom dashboard in under 5 minutes. There's no need to change your LLM provider or application architecture.

Does Fallom support all major LLM providers and frameworks?

Yes, Fallom is provider-agnostic. Its single SDK works with major providers such as OpenAI, Anthropic, and Google Gemini, as well as open-source models. It also integrates with popular agent frameworks (LangChain, LlamaIndex) and is 100% compatible with the OpenTelemetry standard, which avoids vendor lock-in.

How does Fallom handle sensitive or private user data?

Fallom offers robust privacy controls for sensitive deployments. You can enable "Privacy Mode" to disable full content capture, logging only metadata like token counts and latency. Configurable content redaction and per-environment settings allow you to balance observability needs with data protection and compliance requirements.
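The difference between metadata-only capture and content redaction can be sketched in a few lines. This is a generic illustration rather than Fallom's configuration API: the `prepare_trace` function, its parameters, the record fields, and the email pattern are all hypothetical.

```python
import re

# Simple illustrative pattern; real redaction rules are usually broader.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

# Fields that carry user content rather than metadata.
CONTENT_FIELDS = ("prompt", "output")


def prepare_trace(record, privacy_mode=False, redact_emails=True):
    """Return a copy of the record that is safe to export."""
    safe = dict(record)
    for field in CONTENT_FIELDS:
        if field not in safe:
            continue
        if privacy_mode:
            # Metadata-only capture: drop the content entirely.
            del safe[field]
        elif redact_emails:
            # Content capture with redaction: mask matching substrings.
            safe[field] = EMAIL.sub("[REDACTED_EMAIL]", safe[field])
    return safe


record = {
    "prompt": "Email jane@example.com the invoice",
    "output": "Done.",
    "tokens": 12,
    "latency_ms": 340,
}

metadata_only = prepare_trace(record, privacy_mode=True)
redacted = prepare_trace(record)
```

Per-environment settings would then amount to choosing different argument values for, say, production and staging.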

Can I use Fallom for A/B testing different models or prompts?

Absolutely. Fallom includes features specifically for experimentation. You can split traffic between different models (e.g., GPT-4 vs. Claude) or different versions of your prompts stored in the Prompt Store. Compare their performance, cost, and quality metrics side-by-side in the dashboard to make data-driven decisions before full rollout.
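One common way to split traffic is to hash a stable identifier into a bucket, so each user always sees the same variant. The sketch below shows that general technique. It is not Fallom's experimentation API, and the `assign_variant` function, the experiment name, and the variant names are assumptions.

```python
import hashlib


def assign_variant(user_id, variants, experiment="exp-1"):
    """Deterministically map a user to a variant using a stable hash.

    `variants` is a list of (name, weight) pairs whose weights sum to 100.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    threshold = 0
    for name, weight in variants:
        threshold += weight
        if bucket < threshold:
            return name
    raise ValueError("variant weights must sum to 100")


variants = [("model-a", 50), ("model-b", 50)]
assignments = {uid: assign_variant(uid, variants) for uid in map(str, range(1000))}
```

Because the assignment depends only on the experiment name and the user identifier, metrics gathered for each variant can later be compared without storing the assignment separately.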

Alternatives

diffray Alternatives

diffray is a specialized AI-powered code review platform in the development tools category. It uses a team of over 30 expert AI agents to catch real bugs and security issues by analyzing your full codebase, not just the changed lines. This approach dramatically reduces false positives and helps developers ship higher-quality code faster.

Users often explore alternatives for various reasons. These can include budget constraints, the need for different feature sets, or specific integration requirements with their existing tech stack. Some teams might also be looking for a different approach to AI assistance or a platform that aligns with their team's specific workflow preferences.

When evaluating other tools, focus on what matters most for your team's productivity. Key considerations include the accuracy of feedback and the rate of false positives, the depth of code analysis beyond simple line changes, the specialization of the AI in critical areas like security and performance, and how seamlessly the tool integrates into your existing developer workflow without becoming a distraction.

Fallom Alternatives

Fallom is an AI-native observability platform designed for teams running production LLM applications and autonomous agents. It provides real-time visibility into every AI interaction, helping developers monitor, debug, and manage costs. Users often explore alternatives for various reasons, such as budget constraints, specific feature needs like deeper integration with certain cloud providers, or a preference for a different deployment model like self-hosted solutions. When evaluating other options, key factors to consider include the depth of tracing for agentic workflows, real-time cost tracking capabilities, ease of integration with your existing stack, and the overall user experience for debugging and monitoring at scale.