Agenta vs diffray

Side-by-side comparison to help you choose the right AI tool.

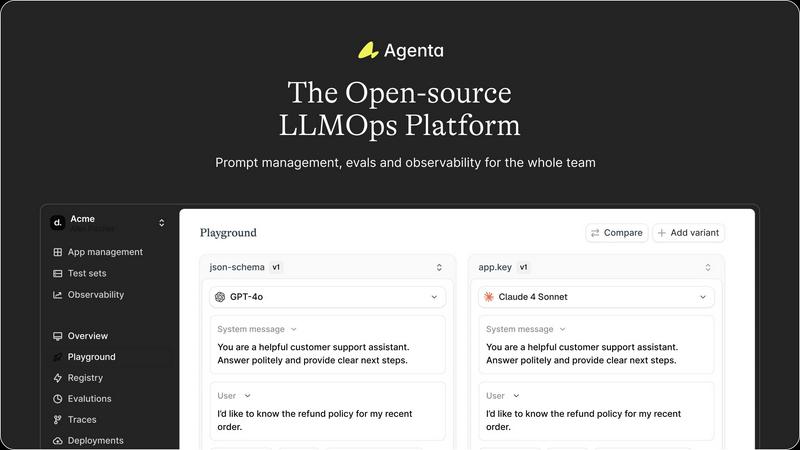

Agenta is the open-source platform for teams to build and manage reliable LLM apps together.

Last updated: March 1, 2026

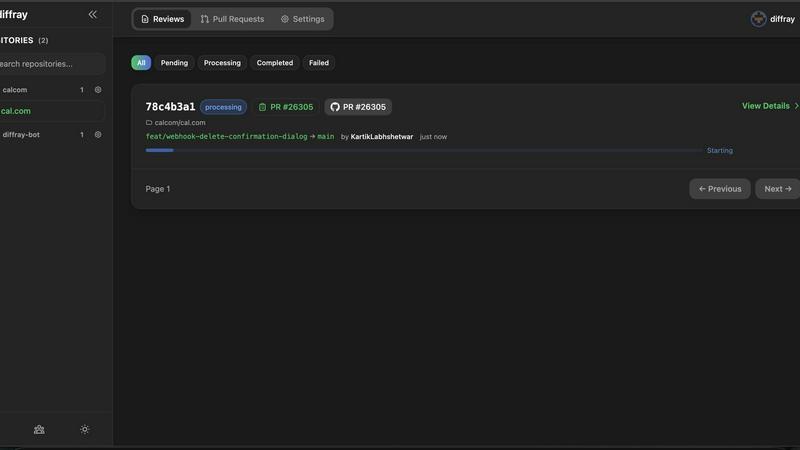

diffray

diffray's AI agents catch real bugs in your code, not just nitpicks.

Last updated: February 28, 2026

Feature Comparison

Agenta

Unified Playground & Prompt Management

Agenta provides a central playground where teams can experiment with, compare, and version-control prompts and models side by side in real time. This creates a single source of truth, ending the chaos of prompts scattered across different tools. You get a complete version history for every change, enabling rollbacks and clear audit trails. The platform is model-agnostic, so you can integrate and test models from any provider without being locked into a single vendor's ecosystem.

Systematic Evaluation & Testing

Move beyond gut feelings with Agenta's robust evaluation framework. It enables you to create a systematic process for running experiments, tracking results, and validating every change before it ships. You can integrate any evaluator, including LLM-as-a-judge, custom code, or built-in metrics. Crucially, you can evaluate the full trace of an agent's reasoning, not just the final output, and incorporate human feedback from domain experts directly into the evaluation workflow for comprehensive validation.
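To make the idea of a "custom code" evaluator concrete, here is a minimal sketch of scoring an application against a small test set. It does not use Agenta's SDK; the names `EvalCase`, `exact_match`, `run_evaluation`, and `stub_app` are hypothetical and exist only for illustration, and `stub_app` stands in for a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalCase:
    """One row of a curated test set (hypothetical structure)."""
    prompt_input: str
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Custom code evaluator: 1.0 when the normalized strings match, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_evaluation(
    app: Callable[[str], str],
    cases: List[EvalCase],
    evaluator: Callable[[str, str], float],
) -> float:
    """Run the app on every case and return the mean evaluator score."""
    scores = [evaluator(app(case.prompt_input), case.expected) for case in cases]
    return sum(scores) / len(scores) if scores else 0.0


def stub_app(text: str) -> str:
    """Stand-in for an LLM-backed application; returns canned answers."""
    answers = {"capital of France?": "Paris", "2 + 2?": "5"}
    return answers.get(text, "")


cases = [EvalCase("capital of France?", "paris"), EvalCase("2 + 2?", "4")]
score = run_evaluation(stub_app, cases, exact_match)
print(f"mean score: {score:.2f}")
```

An LLM-as-a-judge evaluator would fit the same `evaluator` signature, with the comparison delegated to a model call instead of a string match.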

Production Observability & Debugging

Gain deep visibility into your live LLM applications. Agenta traces every production request, allowing you to pinpoint exact failure points when issues arise. You can annotate traces with your team or gather direct feedback from end-users. A powerful feature lets you turn any problematic trace into a test case with a single click, closing the feedback loop between production and development. Monitor performance and detect regressions automatically with live, online evaluations.
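The trace-to-test-case loop described above can be pictured with a small sketch. This is not Agenta's data model or API; `Trace`, `RegressionCase`, and `trace_to_test_case` are hypothetical names, and the fields are assumptions chosen to show the idea of keeping a link back to the originating trace.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Trace:
    """A recorded production request (hypothetical shape)."""
    trace_id: str
    inputs: Dict[str, str]
    output: str
    annotations: List[str] = field(default_factory=list)


@dataclass
class RegressionCase:
    """A test case derived from a problematic trace."""
    inputs: Dict[str, str]
    expected_output: str
    source_trace_id: str


def trace_to_test_case(trace: Trace, corrected_output: str) -> RegressionCase:
    """Copy the trace inputs and pair them with the output a reviewer says is correct."""
    return RegressionCase(
        inputs=dict(trace.inputs),  # copy so later edits do not alter the trace
        expected_output=corrected_output,
        source_trace_id=trace.trace_id,
    )


bad_trace = Trace("t-1", {"question": "Refund window?"}, "90 days", ["wrong policy"])
case = trace_to_test_case(bad_trace, "30 days")
print(case)
```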

Collaborative Workflow for Teams

Agenta breaks down silos by providing a unified workspace for all stakeholders. It offers a safe, no-code UI for domain experts to edit and experiment with prompts. Product managers and experts can run evaluations and compare experiments directly from the interface. The platform ensures full parity between its API and UI, allowing both programmatic and manual workflows to integrate seamlessly into one central hub, fostering true collaboration.

diffray

Multi-Agent AI Architecture

diffray's core innovation is its team of over 30 specialized AI agents. Instead of one model attempting to be a jack-of-all-trades, each agent focuses on a specific domain, such as security, performance, or bug detection. This architecture allows for deep, parallel analysis of your code, so feedback is expert-level and highly relevant. The system routes code sections to the appropriate agents, resulting in broader coverage than a single general-purpose model is likely to achieve.
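As a rough illustration of routing code to specialized reviewers, the sketch below dispatches changed files to simple rule-based "agents" by file extension. diffray's actual routing logic and agents are not described in this comparison, so everything here (`security_agent`, `performance_agent`, `ROUTES`, `review`) is a hypothetical stand-in, and the string checks are deliberately naive placeholders for real analysis.

```python
import os
from typing import Callable, Dict, List

# An agent takes a file path and its contents and returns a list of findings.
Agent = Callable[[str, str], List[str]]


def security_agent(path: str, code: str) -> List[str]:
    """Toy check: flag what looks like a hard-coded password."""
    return [f"{path}: possible hard-coded secret"] if "password =" in code else []


def performance_agent(path: str, code: str) -> List[str]:
    """Toy check: flag files containing two or more for-loops."""
    return [f"{path}: multiple loops, check complexity"] if code.count("for ") >= 2 else []


# Hypothetical routing table: file extension -> agents that should look at it.
ROUTES: Dict[str, List[Agent]] = {
    ".py": [security_agent, performance_agent],
    ".sql": [security_agent],
}


def review(changes: Dict[str, str]) -> List[str]:
    """Send each changed file to the agents registered for its extension."""
    findings: List[str] = []
    for path, code in changes.items():
        suffix = os.path.splitext(path)[1]
        for agent in ROUTES.get(suffix, []):
            findings.extend(agent(path, code))
    return findings


changes = {"app.py": 'password = "hunter2"\n', "README.md": "docs only"}
findings = review(changes)
print(findings)
```

A production system would presumably route on richer signals than file extension and run agents in parallel; the table-driven dispatch is only meant to show the shape of the idea.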

Full-Repository Context Analysis

diffray moves beyond the limited view of a simple diff. It investigates your entire codebase to understand the full context of a change. This means it can identify how new code interacts with existing functions, spot inconsistencies with project-wide patterns, and detect deeper architectural issues. This context-aware review eliminates generic suggestions and provides insights that are truly specific to your project's structure and standards.

Drastic Reduction in False Positives

By leveraging expert agents and full-context analysis, diffray delivers remarkably precise feedback. It filters out the noise that plagues other AI review tools, with teams reporting an 87% reduction in false positives. This lets developers trust the platform's alerts and focus on genuine, critical issues rather than debating incorrect or irrelevant suggestions, which streamlines the entire review workflow.

Seamless GitHub Integration

Designed for a frictionless developer experience, diffray integrates directly into your existing GitHub workflow. Setup is simple and requires minimal configuration. Once connected, it automatically reviews pull requests, posting comments directly on the relevant lines of code. This native integration means there's no need to switch contexts or learn a new interface; intelligent review becomes a natural part of your team's standard development process.

Use Cases

Agenta

Accelerating Agent & Chatbot Development

Teams building conversational AI, customer support agents, or complex multi-step AI agents use Agenta to manage the intricate prompt chains and reasoning steps. The unified playground allows for rapid iteration on system prompts and tools, while full-trace evaluation ensures each step in the agent's logic is performing correctly before deployment, leading to more reliable and effective autonomous systems.

Streamlining LLM-Powered Feature Rollouts

When product teams need to integrate LLM features (like content summarization, classification, or generation) into an existing application, Agenta provides the controlled environment to test and evaluate these features. PMs can collaborate with engineers to run A/B tests on different prompts or models, using systematic evaluations to gather evidence on what works best before a full production release.

Managing Enterprise Prompt Portfolios

Large organizations with multiple teams deploying various LLM applications use Agenta as a central governance platform. It prevents duplication of effort and maintains consistency by offering a centralized repository for all prompts and their versions. Subject matter experts across different departments can contribute to and evaluate prompts relevant to their domain within a secure, managed environment.

Debugging and Improving Live AI Systems

When an LLM application in production exhibits unexpected behavior or a drop in performance, engineers use Agenta's observability features to diagnose the issue. By examining detailed traces, they can isolate the failure to a specific prompt, model call, or data input. They can then save the failing trace as a test case, debug it in the playground, and validate the fix through evaluation, ensuring the same error does not recur.

diffray

Accelerating Pull Request Reviews

Development teams use diffray to drastically cut down PR review time. By providing immediate, high-quality AI feedback as soon as a PR is opened, it gives reviewers a head start and authors actionable items to address early. Teams report reducing average weekly PR review time from 45 minutes to just 12 minutes, allowing them to merge code faster and maintain a rapid development pace without bottlenecks.

Enforcing Code Quality & Best Practices

diffray acts as a consistent, automated guardian of code quality. Its specialized agents continuously check for adherence to best practices, architectural patterns, and style guides across every pull request. This is especially valuable for growing teams or open-source projects, ensuring all contributions maintain a high standard and reducing the stylistic and structural debates that often slow down human reviewers.

Proactive Security & Vulnerability Detection

Security teams and developers leverage diffray's dedicated security agents to catch vulnerabilities early in the development cycle. By analyzing code changes in the context of the entire application, it can identify potential security flaws, insecure dependencies, and common vulnerability patterns before they reach production, shifting security left and making applications more robust by design.

Onboarding New Team Members

New engineers can use diffray as an always-available mentor. As they submit their first pull requests, diffray provides instant, educational feedback on code structure, project-specific patterns, and potential improvements. This accelerates the onboarding process, helps new hires align with team standards quickly, and reduces the initial review burden on senior developers.

Overview

About Agenta

Agenta is the open-source LLMOps platform designed to transform how AI teams build and ship reliable applications powered by large language models. It directly tackles the core challenge of LLM unpredictability by replacing scattered, chaotic workflows with a centralized, collaborative environment for the entire development lifecycle. Built for cross-functional teams, Agenta brings developers, product managers, and subject matter experts into a single, intuitive workflow. It eliminates the frustration of prompts lost in emails and spreadsheets, and of debugging that feels like guesswork. The platform's core value lies in integrating the three critical pillars of modern LLM development: prompt management, systematic evaluation, and production observability. This unified approach allows teams to experiment rapidly, validate every change with concrete evidence, and debug issues efficiently, accelerating time-to-production while reducing risk. As an open-source and model-agnostic solution, Agenta provides the flexibility to use any model or framework, preventing vendor lock-in and letting teams choose the best tools for their specific application needs.

About diffray

diffray is an AI-powered code review platform designed for modern development teams that value speed without sacrificing quality. It cuts through the clutter of generic AI feedback by deploying a multi-agent architecture. Unlike tools that rely on a single AI model, diffray uses over 30 specialized AI agents, each an expert in a specific domain such as security vulnerabilities, performance bottlenecks, bug patterns, code best practices, or even SEO considerations. This targeted, investigative approach allows diffray to understand the context of your changes by examining your entire codebase, not just the lines in the pull request diff. The result is precise, actionable insights that are directly relevant to your project. For developers, this means a shift from sifting through speculative, noisy comments to receiving focused, context-aware reviews. Teams using diffray report an 87% reduction in false positives and a 3x increase in catching critical, real issues early. By integrating with GitHub and offering a simple setup, diffray helps developers ship higher-quality code faster, turning lengthy review cycles into efficient, high-signal conversations.

Frequently Asked Questions

Agenta FAQ

Is Agenta really open-source?

Yes, Agenta is fully open-source. You can dive into the code, self-host the platform, and contribute to its development on GitHub. This model provides maximum flexibility, prevents vendor lock-in, and allows teams to customize the platform to fit their specific infrastructure and security requirements.

How does Agenta handle collaboration between technical and non-technical roles?

Agenta is built specifically for cross-functional collaboration. It provides a user-friendly, no-code web interface that allows product managers and domain experts to safely edit prompts, run evaluations, and compare experiment results without writing any code. This bridges the gap between teams, ensuring everyone works from the same centralized data and workflow.

Can I use Agenta with my existing LLM framework and model providers?

Absolutely. Agenta is designed to be model-agnostic and framework-agnostic. It seamlessly integrates with popular frameworks like LangChain and LlamaIndex, and can work with models from any provider, including OpenAI, Anthropic, Google, and open-source models from Hugging Face. You bring your own models and APIs.

What is the difference between evaluation and observability in Agenta?

Evaluation in Agenta refers to the systematic testing and scoring of prompts and models during development, typically on curated test datasets, to validate performance before deployment. Observability, on the other hand, is about monitoring the live, production application. It involves tracing real-user requests, debugging issues as they happen, and using that production data to create new tests, closing the loop between live ops and development.

diffray FAQ

How does diffray differ from other AI code review tools?

diffray fundamentally differs through its multi-agent architecture. Most tools use a single, general-purpose AI model, which often leads to generic and noisy feedback. diffray employs over 30 AI agents, each a specialist in areas like security, performance, or bugs. This, combined with its analysis of your full codebase context, allows it to provide precise, investigative reviews that dramatically reduce false positives and catch more critical issues.

What programming languages and frameworks does diffray support?

diffray is designed to be versatile and supports a wide range of popular programming languages and frameworks. Its specialized agents are trained to understand the nuances and best practices of different tech stacks. For the most current and detailed list of supported languages, please refer to the official diffray documentation on their website.

Is my code secure with diffray?

Yes. diffray takes code security and privacy seriously. The platform is built with enterprise-grade security practices. You can review their detailed privacy policy and security documentation on their website, which outlines their data handling, encryption standards, and compliance measures to ensure your intellectual property remains protected.

How quickly can my team get started with diffray?

Getting started is incredibly fast and simple. The primary step is integrating diffray with your GitHub organization or repository, a process that takes just a few clicks. There is no complex infrastructure to set up or lengthy configuration required. Once connected, diffray will immediately begin providing intelligent reviews on new pull requests, delivering value from day one.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed for teams building applications powered by large language models. It centralizes the entire development workflow, from prompt experimentation to evaluation and production monitoring, into a single collaborative environment. Users often explore alternatives for various reasons. Some teams might have specific budget constraints or require a fully managed, cloud-hosted solution. Others might need deeper integrations with their existing tech stack, or their use case might be simpler, focusing on just one aspect like prompt management without the need for a full platform. When evaluating other tools, consider your team's primary need. Look for solutions that address the core challenges of LLM development: managing prompt versions, systematically testing changes, and monitoring live applications. The right fit should streamline your workflow, support collaboration, and provide the observability needed to deploy reliable LLM apps with confidence.

diffray Alternatives

diffray is a specialized AI-powered code review platform in the development tools category. It uses a team of over 30 expert AI agents to catch real bugs and security issues by analyzing your full codebase, not just the changed lines. This approach dramatically reduces false positives and helps developers ship higher-quality code faster. Users often explore alternatives for various reasons. These can include budget constraints, the need for different feature sets, or specific integration requirements with their existing tech stack. Some teams might also be looking for a different approach to AI assistance or a platform that aligns with their team's specific workflow preferences. When evaluating other tools, focus on what matters most for your team's productivity. Key considerations include the accuracy of feedback and the rate of false positives, the depth of code analysis beyond simple line changes, the specialization of the AI in critical areas like security and performance, and how seamlessly the tool integrates into your existing developer workflow without becoming a distraction.