Agenta vs qtrl.ai

Side-by-side comparison to help you choose the right AI tool.

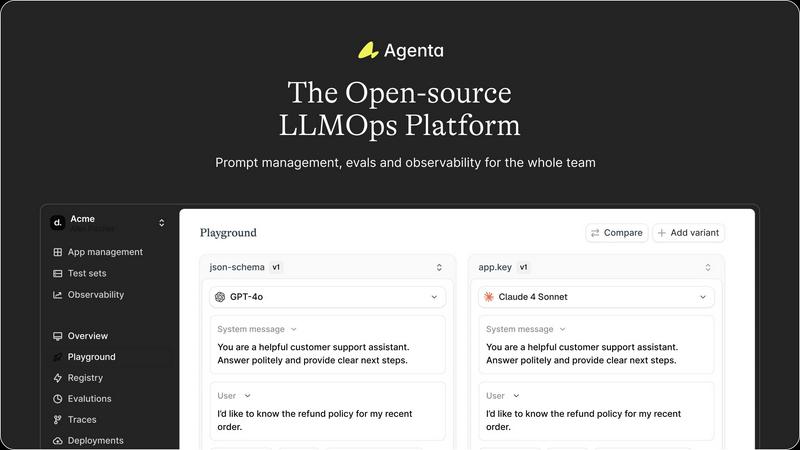

Agenta is the open-source platform for teams to build and manage reliable LLM apps together.

Last updated: March 1, 2026

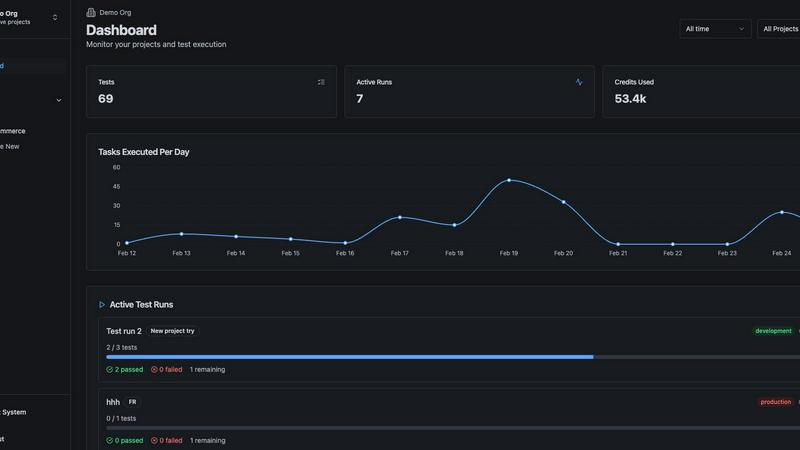

qtrl.ai

qtrl.ai empowers QA teams to scale testing with AI while maintaining control, governance, and seamless integration.

Last updated: March 4, 2026

Feature Comparison

Agenta

Unified Playground & Prompt Management

Agenta provides a central playground where teams can experiment with, compare, and version-control prompts and models side by side in real time. This creates a single source of truth, ending the chaos of prompts scattered across different tools. You get complete version history for every change, enabling rollbacks and clear audit trails. The platform is model-agnostic, allowing you to integrate and test models from any provider without being locked into a single vendor's ecosystem.

Systematic Evaluation & Testing

Move beyond gut feelings with Agenta's robust evaluation framework. It enables you to create a systematic process for running experiments, tracking results, and validating every change before it ships. You can integrate any evaluator, including LLM-as-a-judge, custom code, or built-in metrics. Crucially, you can evaluate the full trace of an agent's reasoning, not just the final output, and incorporate human feedback from domain experts directly into the evaluation workflow for comprehensive validation.
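To make the "custom code" evaluator idea concrete, here is a minimal sketch of what scoring an app against a test set can look like. It is not Agenta's SDK or API: `TestCase`, `run_evaluation`, `exact_match`, `contains_expected`, and `fake_app` are hypothetical names invented for illustration, and `fake_app` stands in for a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class TestCase:
    """One row of a hypothetical test set: an input and the expected answer."""
    prompt_input: str
    expected: str


# Hypothetical evaluator shape: (output, expected) -> score between 0.0 and 1.0.
Evaluator = Callable[[str, str], float]


def exact_match(output: str, expected: str) -> float:
    """Score 1.0 only when the output equals the expected text (case-insensitive)."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def contains_expected(output: str, expected: str) -> float:
    """Score 1.0 when the expected text appears anywhere in the output."""
    return 1.0 if expected.strip().lower() in output.lower() else 0.0


def run_evaluation(
    app: Callable[[str], str],
    test_cases: List[TestCase],
    evaluators: Dict[str, Evaluator],
) -> Dict[str, float]:
    """Run the app on every test case and return the mean score per evaluator."""
    totals = {name: 0.0 for name in evaluators}
    for case in test_cases:
        output = app(case.prompt_input)
        for name, evaluator in evaluators.items():
            totals[name] += evaluator(output, case.expected)
    count = len(test_cases)
    return {name: (total / count if count else 0.0) for name, total in totals.items()}


def fake_app(text: str) -> str:
    """Stand-in for an LLM-backed app; returns canned answers (made-up data)."""
    answers = {"capital of France?": "Paris", "2 + 2?": "The answer is 4"}
    return answers.get(text, "")


cases = [TestCase("capital of France?", "paris"), TestCase("2 + 2?", "4")]
scores = run_evaluation(
    fake_app,
    cases,
    {"exact_match": exact_match, "contains": contains_expected},
)
print(scores)
```

Comparing the two scores shows why the choice of evaluator matters: the second answer is correct but fails a strict exact match, which is the kind of gap an LLM-as-a-judge or human review step is meant to catch.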

Production Observability & Debugging

Gain deep visibility into your live LLM applications. Agenta traces every production request, allowing you to pinpoint exact failure points when issues arise. You can annotate traces with your team or gather direct feedback from end users. You can also turn any problematic trace into a test case with a single click, closing the feedback loop between production and development. Live, online evaluations monitor performance and detect regressions automatically.
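The trace-to-test-case step can be pictured roughly as follows. This is a sketch under stated assumptions, not Agenta's data model: the `Span` and `Trace` classes, the `trace_to_test_case` helper, and the sample refund question are all hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Span:
    """One step in a request (hypothetical shape, not a real tracing schema)."""
    name: str
    inputs: dict
    outputs: dict
    error: Optional[str] = None


@dataclass
class Trace:
    """All spans recorded for one production request; the first span is the root."""
    trace_id: str
    spans: List[Span] = field(default_factory=list)


def first_failing_span(trace: Trace) -> Optional[Span]:
    """Return the earliest span that recorded an error, or None if all succeeded."""
    for span in trace.spans:
        if span.error is not None:
            return span
    return None


def trace_to_test_case(trace: Trace, corrected_output: str) -> dict:
    """Build a test case from a trace: root inputs plus a reviewer-supplied answer."""
    root = trace.spans[0]
    failing = first_failing_span(trace)
    return {
        "source_trace": trace.trace_id,
        "inputs": root.inputs,
        "expected_output": corrected_output,
        "failing_step": failing.name if failing else None,
    }


# Made-up example trace: retrieval fails, so the answer is unhelpful.
trace = Trace(
    trace_id="trace-001",
    spans=[
        Span("request", {"question": "What is the refund window?"}, {}),
        Span("retrieve", {"query": "refund window"}, {"docs": []}, error="no documents found"),
        Span("generate", {"docs": []}, {"answer": "I don't know."}),
    ],
)

test_case = trace_to_test_case(trace, corrected_output="30 days")
print(test_case)
```

Keeping the failing step alongside the inputs means the saved test case records where the request broke as well as what the correct answer should have been.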

Collaborative Workflow for Teams

Agenta breaks down silos by providing a unified workspace for all stakeholders. It offers a safe, no-code UI for domain experts to edit and experiment with prompts. Product managers and experts can run evaluations and compare experiments directly from the interface. The platform ensures full parity between its API and UI, allowing both programmatic and manual workflows to integrate seamlessly into one central hub, fostering true collaboration.

qtrl.ai

Autonomous QA Agents

qtrl.ai's Autonomous QA Agents execute instructions on demand or continuously across various environments. They adhere to user-defined rules to ensure compliance and reliability, running real browser executions rather than simulations.

Enterprise-Grade Test Management

With centralized management of test cases, plans, and runs, qtrl.ai offers full traceability and audit trails. It supports both manual and automated workflows, so compliance and auditability are built in, which is crucial for enterprise environments.

Progressive Automation

Begin with human-written test instructions and transition to AI-generated tests as your team becomes more comfortable. qtrl.ai intelligently suggests new tests based on coverage gaps, allowing teams to review, approve, and refine tests at every phase of development.

Adaptive Memory

qtrl.ai builds a living knowledge base of your application, learning from test executions and issues. This adaptive memory enhances context-aware test generation, becoming more effective with each interaction, which significantly improves overall testing efficiency.

Use Cases

Agenta

Accelerating Agent & Chatbot Development

Teams building conversational AI, customer support agents, or complex multi-step AI agents use Agenta to manage the intricate prompt chains and reasoning steps. The unified playground allows for rapid iteration on system prompts and tools, while full-trace evaluation ensures each step in the agent's logic is performing correctly before deployment, leading to more reliable and effective autonomous systems.

Streamlining LLM-Powered Feature Rollouts

When product teams need to integrate LLM features (like content summarization, classification, or generation) into an existing application, Agenta provides the controlled environment to test and evaluate these features. PMs can collaborate with engineers to run A/B tests on different prompts or models, using systematic evaluations to gather evidence on what works best before a full production release.

Managing Enterprise Prompt Portfolios

Large organizations with multiple teams deploying various LLM applications use Agenta as a central governance platform. It prevents duplication of effort and maintains consistency by offering a centralized repository for all prompts and their versions. Subject matter experts across different departments can contribute to and evaluate prompts relevant to their domain within a secure, managed environment.

Debugging and Improving Live AI Systems

When an LLM application in production exhibits unexpected behavior or a drop in performance, engineers use Agenta's observability features to diagnose the issue. By examining detailed traces, they can isolate the failure to a specific prompt, model call, or data input. They can then save the failing trace as a test case, debug it in the playground, and validate the fix through evaluation, ensuring the same error does not recur.

qtrl.ai

Product-Led Engineering Teams

For product-led engineering teams, qtrl.ai streamlines the QA process by integrating test management and automation into one cohesive platform. This allows teams to focus on delivering high-quality features without sacrificing speed.

QA Teams Scaling Beyond Manual Testing

QA teams transitioning from manual testing can leverage qtrl.ai to automate their workflows progressively. This enables them to maintain oversight while reducing the time spent on repetitive tasks, leading to better resource allocation.

Companies Modernizing Legacy QA Workflows

Organizations modernizing their QA workflows can benefit from qtrl.ai's structured approach to test management and automation. This facilitates smoother transitions from outdated practices to modern, efficient QA strategies that enhance product quality.

Enterprises Requiring Governance and Traceability

Enterprises with strict compliance requirements will find qtrl.ai's full traceability and audit trails invaluable. The platform is designed to meet governance needs, ensuring that every testing phase is documented and compliant with industry standards.

Overview

About Agenta

Agenta is the open-source LLMOps platform designed to transform how AI teams build and ship reliable applications powered by large language models. It directly tackles the core challenge of LLM unpredictability by replacing scattered, chaotic workflows with a centralized, collaborative environment for the entire development lifecycle. Built for cross-functional teams, Agenta brings developers, product managers, and subject matter experts into a single, intuitive workflow. It eliminates the frustration of prompts lost in emails and spreadsheets and debugging that feels like guesswork.

The platform's core value lies in seamlessly integrating the three critical pillars of modern LLM development: prompt management, systematic evaluation, and production observability. This unified approach allows teams to experiment rapidly, validate every change with concrete evidence, and efficiently debug issues, dramatically accelerating time-to-production while reducing risk. As an open-source and model-agnostic solution, Agenta provides the flexibility to use any model or framework, preventing vendor lock-in and empowering teams to choose the best tools for their specific application needs.

About qtrl.ai

qtrl.ai is an innovative quality assurance (QA) platform that empowers software teams to elevate their QA processes without compromising on control or governance. By merging robust test management capabilities with advanced AI automation, qtrl.ai serves as a centralized hub for organizing test cases, planning test runs, tracing requirements to coverage, and monitoring quality metrics through intuitive real-time dashboards. This comprehensive structure provides engineering leads and QA managers with clear insights into testing progress, pass rates, and potential risks.

What sets qtrl.ai apart is its progressive AI layer, allowing teams to gradually implement intelligent automation. Starting with manual test management, teams can evolve to leverage autonomous agents that generate UI tests from simple English descriptions, adapt to application changes, and execute tests across various browsers and environments.

This flexibility makes qtrl.ai ideal for product-driven engineering teams, QA departments moving past manual testing, organizations modernizing their legacy workflows, and enterprises with stringent compliance needs. Ultimately, qtrl.ai bridges the divide between the slow nature of manual testing and the complexities of traditional automation, providing a reliable pathway toward faster and more intelligent quality assurance.

Frequently Asked Questions

Agenta FAQ

Is Agenta really open-source?

Yes, Agenta is fully open-source. You can dive into the code, self-host the platform, and contribute to its development on GitHub. This model provides maximum flexibility, prevents vendor lock-in, and allows teams to customize the platform to fit their specific infrastructure and security requirements.

How does Agenta handle collaboration between technical and non-technical roles?

Agenta is built specifically for cross-functional collaboration. It provides a user-friendly, no-code web interface that allows product managers and domain experts to safely edit prompts, run evaluations, and compare experiment results without writing any code. This bridges the gap between teams, ensuring everyone works from the same centralized data and workflow.

Can I use Agenta with my existing LLM framework and model providers?

Absolutely. Agenta is designed to be model-agnostic and framework-agnostic. It seamlessly integrates with popular frameworks like LangChain and LlamaIndex, and can work with models from any provider, including OpenAI, Anthropic, Google, and open-source models from Hugging Face. You bring your own models and APIs.

What is the difference between evaluation and observability in Agenta?

Evaluation in Agenta refers to the systematic testing and scoring of prompts and models during development, typically on curated test datasets, to validate performance before deployment. Observability, on the other hand, is about monitoring the live production application. It involves tracing real user requests, debugging issues as they happen, and using that production data to create new tests, closing the loop between production and development.

qtrl.ai FAQ

What makes qtrl.ai different from traditional QA tools?

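qtrl.ai combines enterprise-grade test management with progressive AI automation, allowing teams to scale their QA efforts without losing control. Unlike traditional tools, it supports incremental adoption: teams can start with human-written test instructions and move to AI-generated tests as they become more comfortable, reviewing and approving tests along the way.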

Can qtrl.ai integrate with existing tools?

Yes, qtrl.ai is built to support existing workflows and can integrate with various tools in your CI/CD pipeline. This ensures a seamless transition and enhances the overall efficiency of your QA processes.

How does adaptive memory work in qtrl.ai?

Adaptive memory in qtrl.ai accumulates knowledge from your application through test executions and issues. This ongoing learning process powers smarter, context-aware test generation, making the platform increasingly effective over time.

Is qtrl.ai suitable for teams with strict compliance needs?

Absolutely. qtrl.ai is designed with governance in mind, offering full traceability and audit trails essential for enterprises that require strict compliance and oversight in their QA processes.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed for teams building applications powered by large language models. It centralizes the entire development workflow, from prompt experimentation to evaluation and production monitoring, into a single collaborative environment.

Users often explore alternatives for various reasons. Some teams might have specific budget constraints or require a fully managed, cloud-hosted solution. Others might need deeper integrations with their existing tech stack, or their use case might be simpler, focusing on just one aspect like prompt management without the need for a full platform.

When evaluating other tools, consider your team's primary need. Look for solutions that address the core challenges of LLM development: managing prompt versions, systematically testing changes, and monitoring live applications. The right fit should streamline your workflow, support collaboration, and provide the observability needed to deploy reliable LLM apps with confidence.

qtrl.ai Alternatives

qtrl.ai is a cutting-edge QA platform that empowers software teams to enhance their quality assurance processes through AI-driven automation while maintaining full control and governance. As part of the automation and development tools category, qtrl.ai offers a centralized hub for organizing test cases, planning test runs, and tracking quality metrics, making it a favorite among product-led engineering teams and QA groups looking to evolve from manual testing.

Users often seek alternatives to qtrl.ai for various reasons, including pricing concerns, specific feature requirements, or compatibility with existing platforms. When exploring alternatives, it’s essential to consider factors like ease of integration, the flexibility of automation capabilities, and the level of support provided. A thorough evaluation of these aspects will help ensure that the selected solution aligns with your team's unique needs and enhances your quality assurance strategy.